We're really excited to launch our latest competition! In addition to an interesting, new prize structure, the subject matter is at the intersection of sustainability and industry. Improvements to these kinds of processes can have upside for both a business and the planet.

The presence of particles, bacteria, allergens, or other foreign material in a food or beverage product can put consumers at risk. Manufacturers put extra care into ensuring that equipment is properly cleaned between uses to avoid any contamination. At the same time, the cleaning processes require substantial resources in the form of time and cleaning supplies, which are often water and chemical mixtures (e.g. caustic soda, acid, etc.).

Given these concerns, the cleaning stations measure turbidity during the cleaning process. Turbidity quanitifies the suspended solids in the liquids that are coming out of the cleaning tank. The goal is to have those liquids be turbidity free, indicating that the equipment is fully clean. Depending on the expected level of turbidity, a cleaning station operator can either extend the final rinse (to eliminate remaining turbidity) or shorten it (saving time and water consumption).

The goal of this competition is to predict turbidity in the last rinsing phase in order to help minimize the use of water, energy and time, while ensuring high cleaning standards.

A Clean-In-Place system that is commonly used for cleaning in the food and beverage industry.

In this post, we'll walk through a very simple first pass model for predicting turbidity in the final rinse stage, showing you how to load the data, make some predictions, and then submit those predictions to the competition.

To get started, we import libraries for loading, manipulating, and visualizing the data.

%matplotlib inline

# mute warnings for this blog post

import warnings

warnings.filterwarnings("ignore")

from pathlib import Path

import numpy as np

import pandas as pd

pd.set_option("display.max_columns", 40)

import matplotlib.pyplot as plt

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestRegressor

DATA_DIR = Path("../data/final/public")

Loading the data¶

On the data download page, we provide everything you need to get started:

Training Values: These are the features you'll use to train a model. There are 35 features in the data, including metadata on the cleaning process, phase, and object as well as time series data, sampled every 2 seconds. The time series measurements pertain to the monitoring and control of different cleaning process variables in both supply and return Clean-In-Place lines as well as in cleaning material tanks during the cleaning operations.

Training Labels: These are the labels. Every

process_idin the training values data has a correspondingfinal_rinse_total_turbidity_literlabel in this file.final_rinse_total_turbidity_literis defined as the total quantity of turbidity returned during the target time period multiplied by the outgoing flow during the final rinsing, for each cleaning process.Test Values: These are the features you'll use to make predictions after training a model. We don't give you the labels for these samples, it's up to you to generate turbidity predictions for the final rinsing phase of these processes.

Submission Format: This gives us the filenames and columns of our submission prediction, filled with all 1.0 as a baseline. Your submission to the leaderboard must be in this exact form (with different prediction values, of course) in order to be scored successfully!

# for training our model

train_values = pd.read_csv(

DATA_DIR / "train_values.csv", index_col=0, parse_dates=["timestamp"]

)

train_labels = pd.read_csv(DATA_DIR / "train_labels.csv", index_col=0)

Let's take a peek at our training features and the labels.

train_values.head()

train_values.dtypes

train_labels.head()

Explore the data¶

Let's get a better understanding of how the target variable is calculated by examining its components, return_turbidity and return_flow, over the target time period. The target time period is when we want to measure turbidity, and is indicated with the boolean column target_time_period. This is when we are in the final rinse and the return caustic and return acid valves have been closed for the last time.

For this exploration, we'll just look at a single cleaning process.

# subset to final rinse phase observations

final_phases = train_values[(train_values.target_time_period)]

# let's look at just one process

final_phase = final_phases[final_phases.process_id == 20017]

The target variable is calculated as follows for the final rinse phase: sum(max(0, return_flow) * return_turbidity).

# calculate target variable

final_phase = final_phase.assign(

target=np.maximum(final_phase.return_flow, 0) * final_phase.return_turbidity

)

Let's plot return flow, return turbidity, and the product of the two (turbidity measured in NTU.L) side by side.

# plot flow, turbidity, and target

fig, ax = plt.subplots(nrows=1, ncols=3, figsize=(20, 5))

ax[0].plot(final_phase.return_flow)

ax[0].set_title("Return flow in final phase")

ax[1].plot(final_phase.return_turbidity, c="orange")

ax[1].set_title("Return turbidity in final phase")

ax[2].plot(final_phase.target, c="green")

ax[2].set_title("Turbidity in final phase in NTU.L");

We sum over the final rinse phase to get the target value for this process, and confirm this matches the label for this process in train_labels.csv.

# sum to get target

final_phase.target.sum()

# confirm that value matches the target label for this process_id

train_labels.loc[20017]

Pre-process the data¶

Subset the data¶

Before doing some feature engineering, we'll want to subset our training dataset to exclude observations from the final rinse phase. The training data contains all of the available phases for reference, but the test set does not.

As per the problem description, a single object is cleaned in each process and there are five possible phases for each process:

- Pre-rinse phase: Rinse water is pushed into the cleaning object

- Caustic phase: Caustic soda is pushed into the cleaning object

- Intermediate rinse phase: Clean or rinse water is pushed into the object

- Acid phase: Nitric acid is pushed into the cleaning object

- Final rinse phase: Clean water is pushed into the object

The test set does not include any observations from the final rinsing phase, as the goal is to predict final turbidity early enough in advance so the cleaning station operator can adjust the length of the final rinse accordingly. To ensure our model doesn't depend on observations from the final rinse phase, it's important to remove these observations from our training data.

train_values = train_values[train_values.phase != "final_rinse"]

In the train set, you are given all available data for each cleaning process. However, in the test set you are only given data from selected phases (up to a given time, t) and then asked to predict into the future.

- For 10% of the test instances, t corresponds to the end of the first (pre-rinse) phase.

- For 30% of the test instances, t corresponds to the end of the second (caustic) phase.

- For 30% of the test instances, t corresponds to the end of the third (intermediate rinse) phase.

- For 30% of the test instances, t corresponds to the end of the fourth (acid) phase.

To help our train set better match the test set, let's randomly drop out phases from our training set.

train_values.groupby("process_id").phase.nunique().value_counts().sort_index().plot.bar()

plt.title("Number of Processes with $N$ Phases");

# create a unique phase identifier by joining process_id and phase

train_values["process_phase"] = (

train_values.process_id.astype(str) + "_" + train_values.phase.astype(str)

)

process_phases = train_values.process_phase.unique()

# randomly select 80% of phases to keep

rng = np.random.RandomState(2019)

to_keep = rng.choice(

process_phases, size=np.int(len(process_phases) * 0.8), replace=False

)

train_limited = train_values[train_values.process_phase.isin(to_keep)]

# subset labels to match our training data

train_labels = train_labels.loc[train_limited.process_id.unique()]

train_limited.groupby("process_id").phase.nunique().value_counts().sort_index().plot.bar()

plt.title("Number of Processes with $N$ Phases (Subset for Training)");

Feature engineering¶

In train_values.csv, we have time series measurements sampled every 2 seconds, meaning we have many observations for each process. Our target variable is at the process level, so we'll want a feature matrix where each row corresponds to a unique process_id.

Since this is a benchmark, we're only going to use a subset of the variables in the dataset. It's up to you to take advantage of all the information!

First, let's create some features from the metadata about the cleaning processes. We'll create dummy variables for which pipeline the process occurs on and count the number of phases each process has.

def prep_metadata(df):

# select process_id and pipeline

meta = df[["process_id", "pipeline"]].drop_duplicates().set_index("process_id")

# convert categorical pipeline data to dummy variables

meta = pd.get_dummies(meta)

# pipeline L12 not in test data

if "L12" not in meta.columns:

meta["pipeline_L12"] = 0

# calculate number of phases for each process_object

meta["num_phases"] = df.groupby("process_id")["phase"].apply(lambda x: x.nunique())

return meta

# show example for first 5,000 observations

prep_metadata(train_limited.head(5000))

Then, we'll select the float variable measurements and calculate the following summary statistics for each:

- minimum

- maximum

- mean

- standard deviation

- average value of the last five observations

# variables we'll use to create our time series features

ts_cols = [

"process_id",

"supply_flow",

"supply_pressure",

"return_temperature",

"return_conductivity",

"return_turbidity",

"return_flow",

"tank_level_pre_rinse",

"tank_level_caustic",

"tank_level_acid",

"tank_level_clean_water",

"tank_temperature_pre_rinse",

"tank_temperature_caustic",

"tank_temperature_acid",

"tank_concentration_caustic",

"tank_concentration_acid",

]

def prep_time_series_features(df, columns=None):

if columns is None:

columns = df.columns

ts_df = df[ts_cols].set_index("process_id")

# create features: min, max, mean, standard deviation, and mean of the last five observations

ts_features = ts_df.groupby("process_id").agg(

["min", "max", "mean", "std", lambda x: x.tail(5).mean()]

)

return ts_features

# show example for first 5,000 observations

prep_time_series_features(train_limited.head(5000), columns=ts_cols)

Let's write a simple function to aggregate all this feature engineering.

def create_feature_matrix(df):

metadata = prep_metadata(df)

time_series = prep_time_series_features(df)

# join metadata and time series features into a single dataframe

feature_matrix = pd.concat([metadata, time_series], axis=1)

return feature_matrix

train_features = create_feature_matrix(train_limited)

train_features.head()

The error metric¶

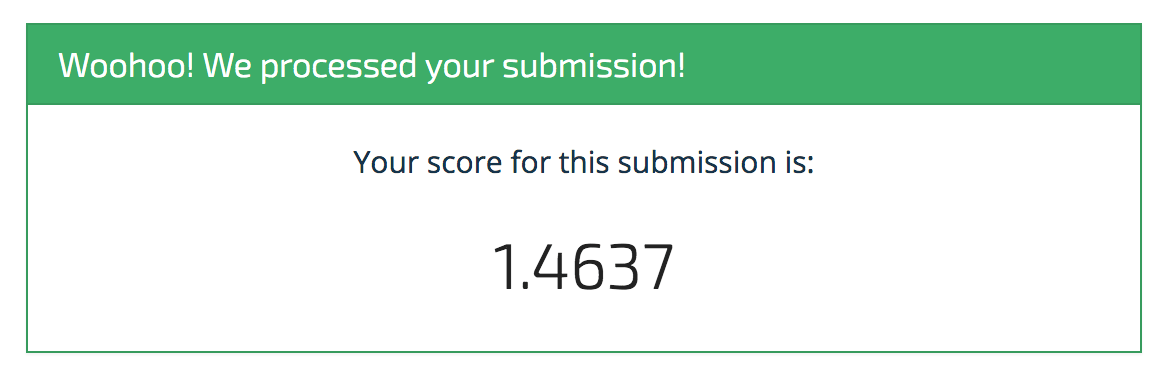

The metric for this competition is mean adjusted absolute error, which captures how much the predicted value of turbidity in the final rinse phase differs from the actual value. These percent differences are then averaged across all cleaning processes to get a final score.

See the competition problem description page for more info on the metric!

Build the model¶

Now that we have our process level features, we're ready to train a model. Random forests are often a good model to try first, especially when we have numeric and categorical variables in our feature space. scikit-learn makes this quick and easy.

%%time

rf = RandomForestRegressor(n_estimators=1000, random_state=2019)

rf.fit(train_features, np.ravel(train_labels))

Time to predict and submit¶

Let's load up the test data, generate our features, and see how well we score on the leaderboard.

# load the test data

test_values = pd.read_csv(

DATA_DIR / "test_values.csv", index_col=0, parse_dates=["timestamp"]

)

# create metadata and time series features

test_features = create_feature_matrix(test_values)

test_features.head()

Make predictions¶

We call the predict method on our model to generate a prediction for each process.

preds = rf.predict(test_features)

Save submission¶

In order for our submission to be evaluated successfully, it needs to exactly match the format of submission_format.csv. We can use the column name and index from the submission format to ensure our predictions are in the correct format.

submission_format = pd.read_csv(DATA_DIR / "submission_format.csv", index_col=0)

# confirm everything is in the right order

assert np.all(test_features.index == submission_format.index)

my_submission = pd.DataFrame(

data=preds, columns=submission_format.columns, index=submission_format.index

)

my_submission.head()

my_submission.to_csv("submission.csv")

Check the head of the saved file.

!head submission.csv

Looks good, let's send it off!

Submit to leaderboard¶

Woohoo! It's a start! And that's exactly what we intend with these benchmarks. We're sure you'll be able to top this model in no time, and we can't wait to see what you come up with. Happy importing!